My GPT-5.5 Review—A 6-Hour Autonomous Task and the Bluetooth Hack No Other Model Could Solve

I put OpenAI's new GPT-5.5 Pro to the test with three real jobs, including a six-hour autonomous data migration that crushed months of tech debt and a hardware hack that stumped every other model.

Claire Vo

Welcome back to How I AI! I’m Claire Vo, and for the past couple of weeks, I’ve had early access to OpenAI’s new GPT-5.5 and GPT-5.5 Pro models. I’ve been putting them through the wringer, and I’m here to tell you what I’ve found. Spoiler: this model is a powerhouse, especially for advanced coding tasks.

OpenAI claims the new model has a higher capacity for complex work and is more efficient. After hours and hours of testing, I can confirm this is true. But what really struck me wasn't just the speed; it was the ambition it unlocked. With GPT-5.5, I was able to tackle problems that had been sitting on my back burner for months, problems that other models simply couldn't crack. It’s more intelligent, more autonomous, and for the right kind of problem, it’s absolutely worth the cost (and it is pricey!).

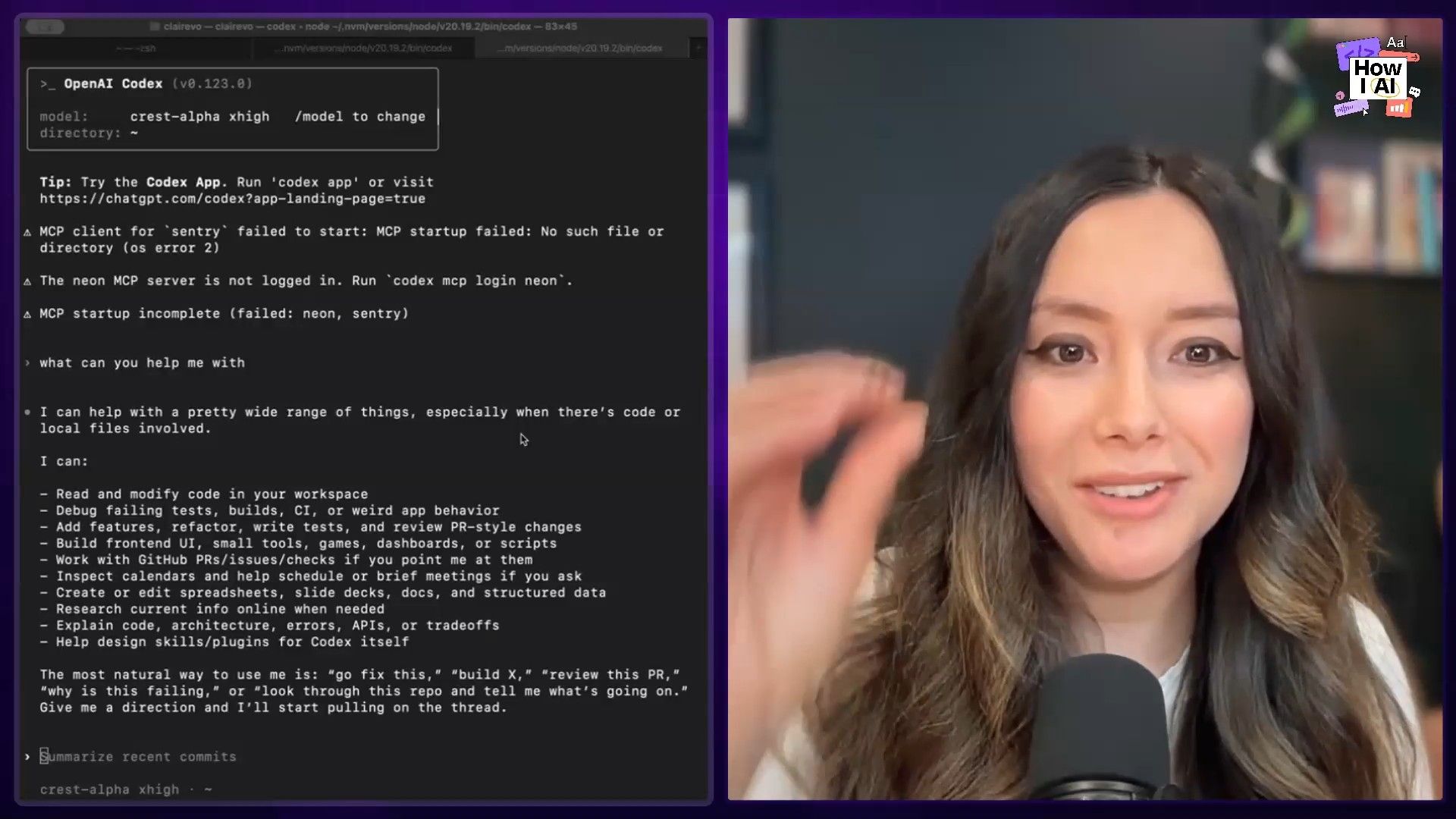

In this episode, I’m not just talking theory. I'm walking you through three specific workflows I used to test the limits of GPT-5.5. We’ll start with a simple test in ChatGPT that reveals a fascinating challenge with super-intelligent models. Then, we’ll move into Codex, where this model truly shines, and I'll show you how it autonomously obliterated a massive piece of technical debt in my ChatPRD codebase. Finally, I’ll share my personal, high-tech benchmark: hacking a proprietary Bluetooth speaker that had defeated me for months. Let’s get to it.

Workflow 1: A Quick Test in ChatGPT - The "Intelligence Overhang" Problem

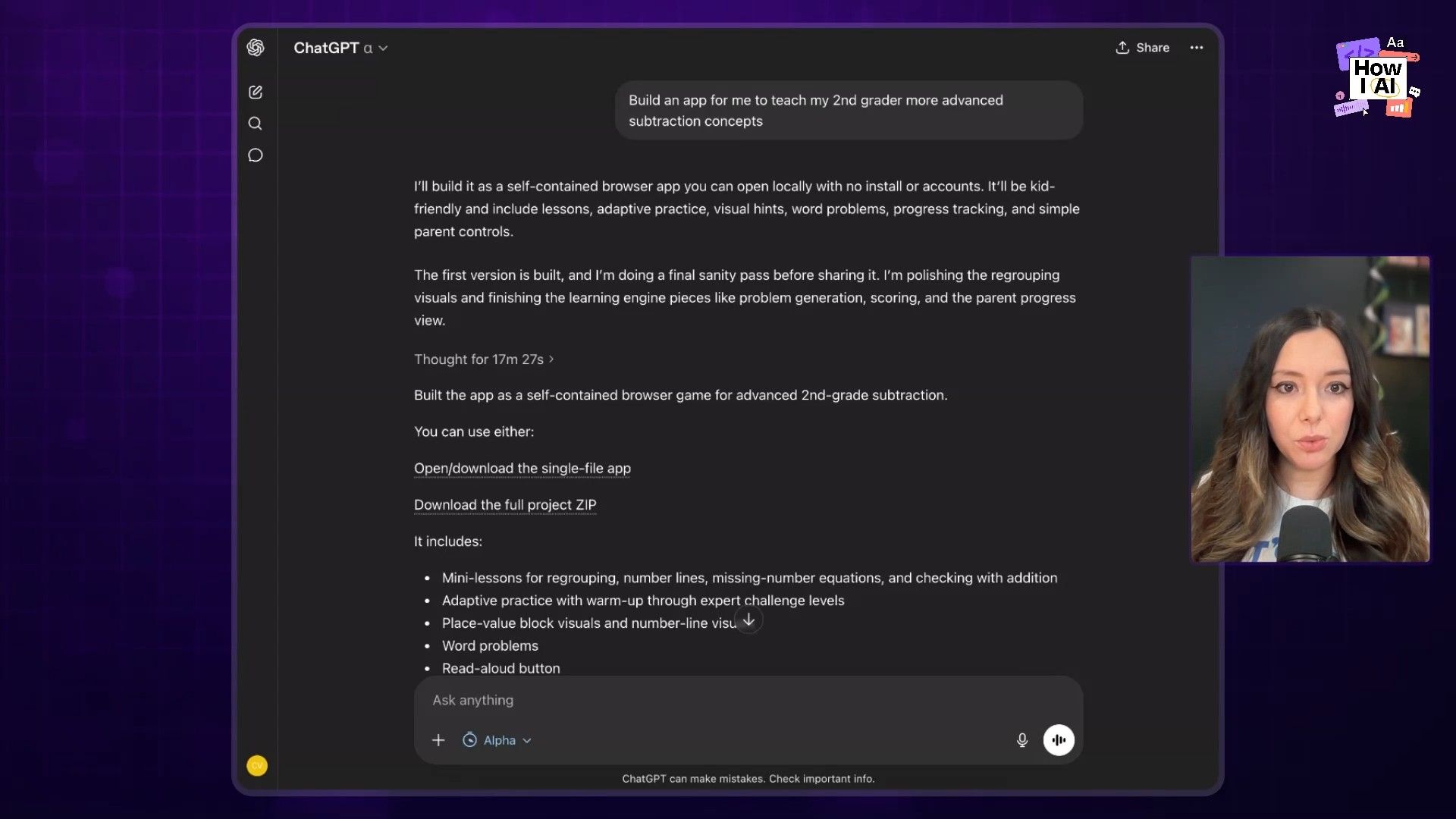

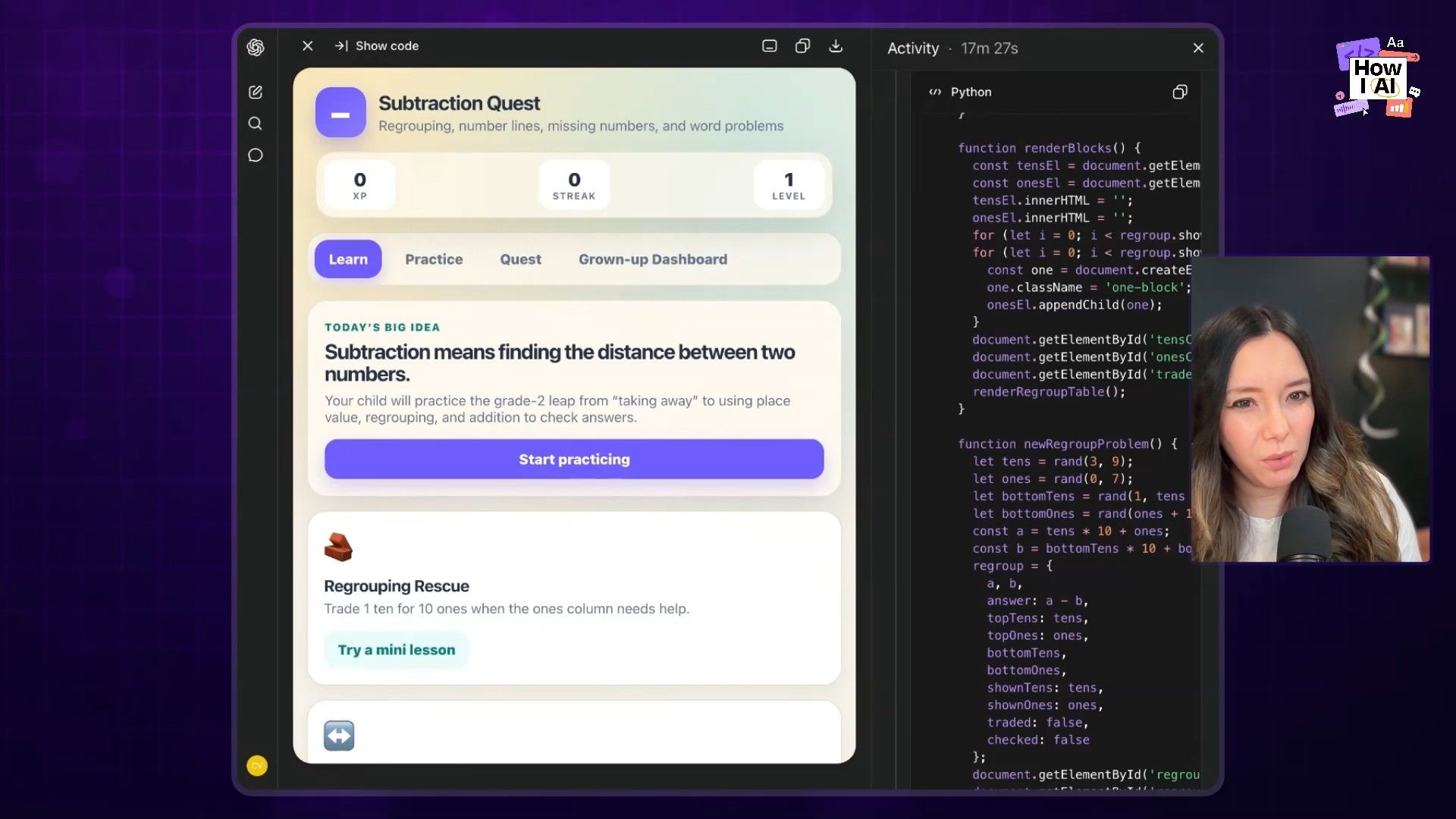

Before throwing my hardest problems at the new model, I wanted to see how it handled a relatively straightforward task inside the familiar ChatGPT interface. I'm currently teaching my first-grader two- and three-digit subtraction, so I asked it to build a little learning app.

My prompt was simple: "build an app for me to teach my second grader more advanced subtraction concepts."

First, I noticed that this model is a thinker. It spent 17 minutes and 27 seconds planning and generating the code. While the result was a perfectly functional app with different modules, mini-lessons, and word problems, it raised a question for me: do we need nearly 18 minutes of hyper-intelligent thought to build a simple subtraction app? For a non-technical user, that's a long time to wait.

The experience highlighted what I call the "intelligence overhang." We now have models with incredible reasoning power, but it can be hard to find consumer or everyday business problems in an interface like ChatGPT that actually require that level of intelligence. The real magic, as I soon discovered, happens when you apply this power to deeply complex, technical challenges.

Workflow 2: Annihilating Tech Debt with GPT-5.5 Pro in Codex

This is where my jaw really dropped. Moving over to Codex, I decided to throw GPT-5.5 Pro at the technical debt that has been plaguing the ChatPRD codebase for months. The results were incredible.

Step 1: Automated Security Remediation

First up was a list of low-severity security issues identified by OpenAI's Codex Security product. Instead of tackling them one by one, I handed the whole list to the model at once.

- Input: I downloaded the list of vulnerabilities as a CSV file.

- Action: I uploaded the CSV directly into Codex.

- Prompt: I gave it a direct, high-level command:

Can you please architecturally review these issues, group them if they're thematic, and then propose a change, and then make those changes.

- Result: It just did it. It analyzed the list, grouped related issues, proposed architectural changes, and implemented the code fixes. The best validation came shortly after when our annual penetration test came back completely clean. This proved I could hand it a triage list of bugs or security issues and trust it to execute.
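To make the "group them if they're thematic" step concrete, here is a minimal Python sketch of what grouping a findings CSV by theme could look like. It is not what Codex actually ran, and the column names (`id`, `category`, `file`) and sample rows are hypothetical, since the real export format isn't shown here.

```python
import csv
import io
from collections import defaultdict


def group_issues(csv_text: str, key: str = "category") -> dict[str, list[dict[str, str]]]:
    """Group rows of a findings CSV by one column.

    The column name is an assumption for illustration; a real export
    may label its theme column differently.
    """
    groups: dict[str, list[dict[str, str]]] = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Normalize case and whitespace so "Input Validation" and
        # "input validation" land in the same bucket.
        theme = (row.get(key) or "uncategorized").strip().lower()
        groups[theme].append(row)
    return dict(groups)


# Hypothetical sample data, not real findings.
SAMPLE = """id,category,file
1,Input Validation,api/upload.ts
2,input validation,api/import.ts
3,Session Handling,auth/session.ts
"""

if __name__ == "__main__":
    for theme, rows in sorted(group_issues(SAMPLE).items()):
        print(f"{theme}: {len(rows)} issue(s)")
```

Grouping first is what makes an architectural fix possible: two findings in the same bucket often share one root cause, so one change can close both.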

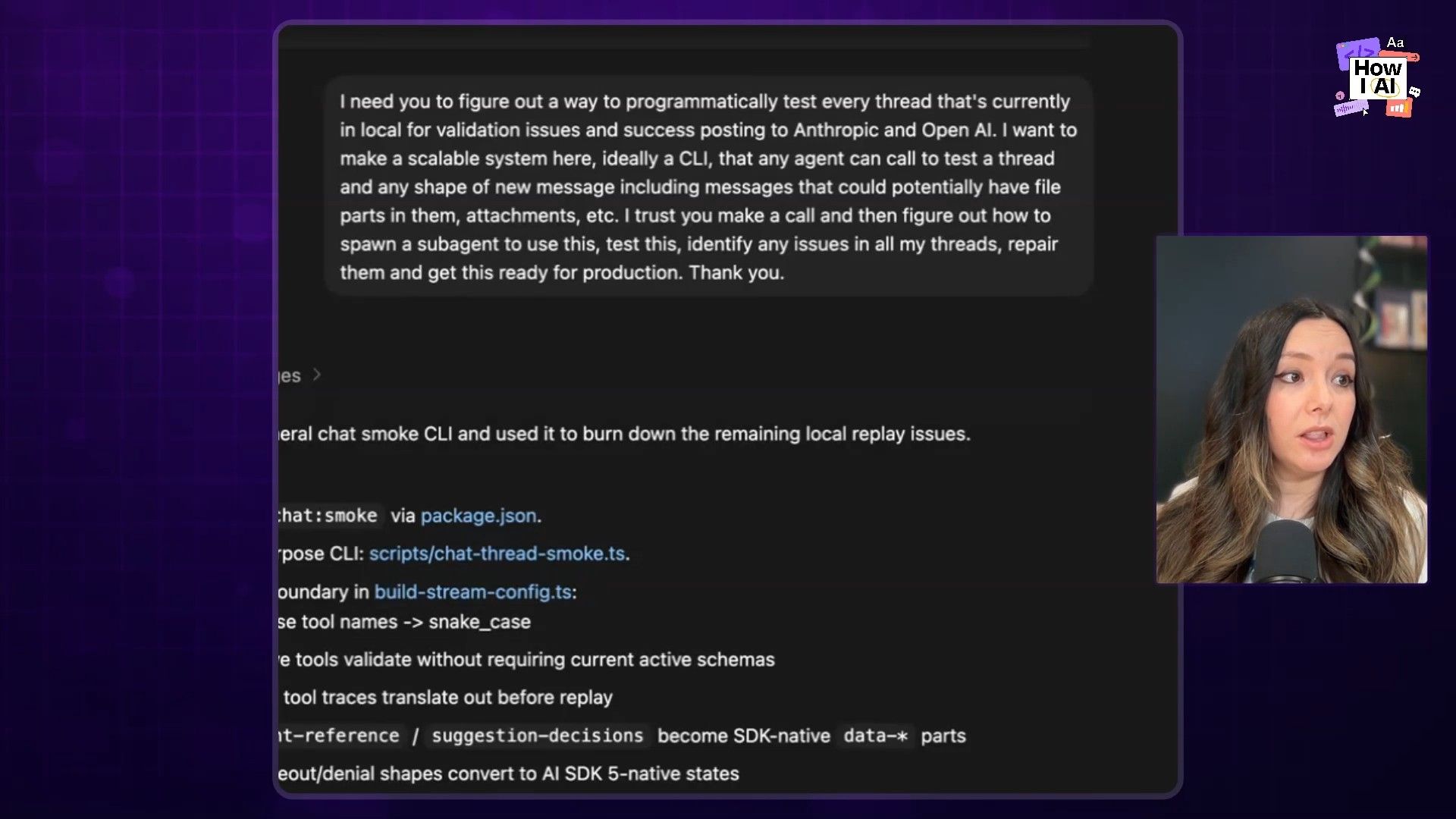

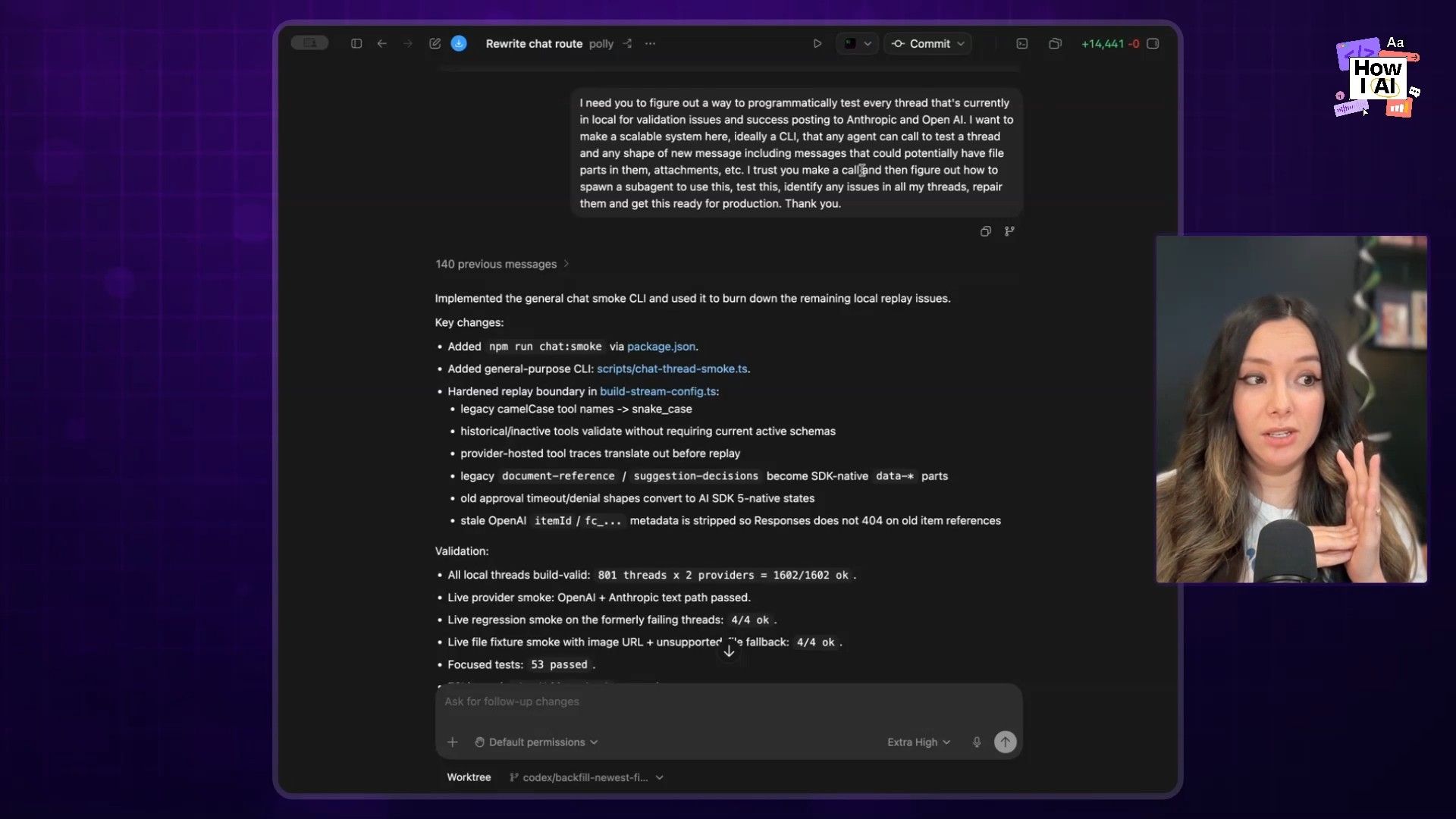

Step 2: The 6-Hour Autonomous Data Migration

This was the big one. For years, we've accumulated millions of chat threads in our database in various legacy formats. As models from OpenAI and Anthropic evolved, their API response structures changed, leaving us with messy, unstructured data full of edge cases. It was a data migration nightmare I'd been patching and avoiding because every fix seemed to create a new problem.

I handed the whole mess over to GPT-5.5 Pro. It started by building a migration script that, in one shot, handled about 98% of the edge cases we knew about. But the truly amazing part was getting it to validate its own work. I gave it this prompt:

Look, I need you to figure out a way to programmatically test every thread that's in local... Post it to Anthropic and OpenAI and any other provider that we're using. I need you to make a scalable system for our team to do this programmatically, ideally through a CLI, so that any agent can test any thread for these data issues. I trust you to make a call, figure out how to spawn a subagent to do this, test it, and identify any issues, repair them, and get this ready for production. Thank you.

And then I walked away. The model worked for five hours and 57 minutes with zero follow-ups or steering from me. It created a sub-agent, built a smoke testing system, ran our production-like data through it, identified issues, and repaired them in a self-sustaining loop. I have never seen this level of autonomy from an AI model before.

After it was all done, we ran 2 million rows through the migration. We were left with a single, solitary edge case. One. And the impact was immediate. Our error rate in Sentry, which had been a constant headache, dropped to the floor.
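For a sense of what "migrate, then smoke test every thread" means structurally, here is a small Python sketch. It assumes two legacy shapes that both providers' APIs have used for message content (a plain string, or a list of `{"type": "text", "text": ...}` blocks) and normalizes them into one. The function names and target shape are hypothetical, and unlike the system described above, this check is offline only: it never posts anything to a provider.

```python
from typing import Any

VALID_ROLES = {"system", "user", "assistant"}


def normalize_message(raw: dict[str, Any]) -> dict[str, Any]:
    """Coerce a legacy message into role plus a list of text blocks.

    Target shape (an assumption for this sketch):
    {"role": str, "content": [{"type": "text", "text": str}, ...]}
    """
    role = raw.get("role")
    if role not in VALID_ROLES:
        raise ValueError(f"unknown role: {role!r}")

    content = raw.get("content")
    if isinstance(content, str):
        # Oldest shape: content is a bare string.
        parts: list[Any] = [content]
    elif isinstance(content, list):
        parts = content
    else:
        raise ValueError(f"unsupported content: {content!r}")

    blocks = []
    for part in parts:
        if isinstance(part, str):
            blocks.append({"type": "text", "text": part})
        elif (
            isinstance(part, dict)
            and part.get("type") == "text"
            and isinstance(part.get("text"), str)
        ):
            blocks.append({"type": "text", "text": part["text"]})
        else:
            raise ValueError(f"unsupported content part: {part!r}")
    return {"role": role, "content": blocks}


def smoke_test(threads: dict[str, list[dict[str, Any]]]) -> dict[str, str]:
    """Return {thread_id: first error} for threads that fail to normalize."""
    failures: dict[str, str] = {}
    for thread_id, messages in threads.items():
        try:
            for message in messages:
                normalize_message(message)
        except ValueError as exc:
            failures[thread_id] = str(exc)
    return failures


if __name__ == "__main__":
    threads = {
        "ok": [{"role": "user", "content": "hello"}],
        "broken": [{"role": "assistant", "content": None}],
    }
    print(smoke_test(threads))
```

The useful property is the loop it enables: run the check over every thread, fix the normalizer for each failure class, and rerun until the failure map is empty or down to cases that need a human decision.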

This completely changed my perspective. AI coding isn't about decreasing quality; it's about systematically raising it by tackling the complex, tedious work we humans often avoid.

Workflow 3: My Personal AI Benchmark - Hacking a Proprietary Bluetooth Speaker

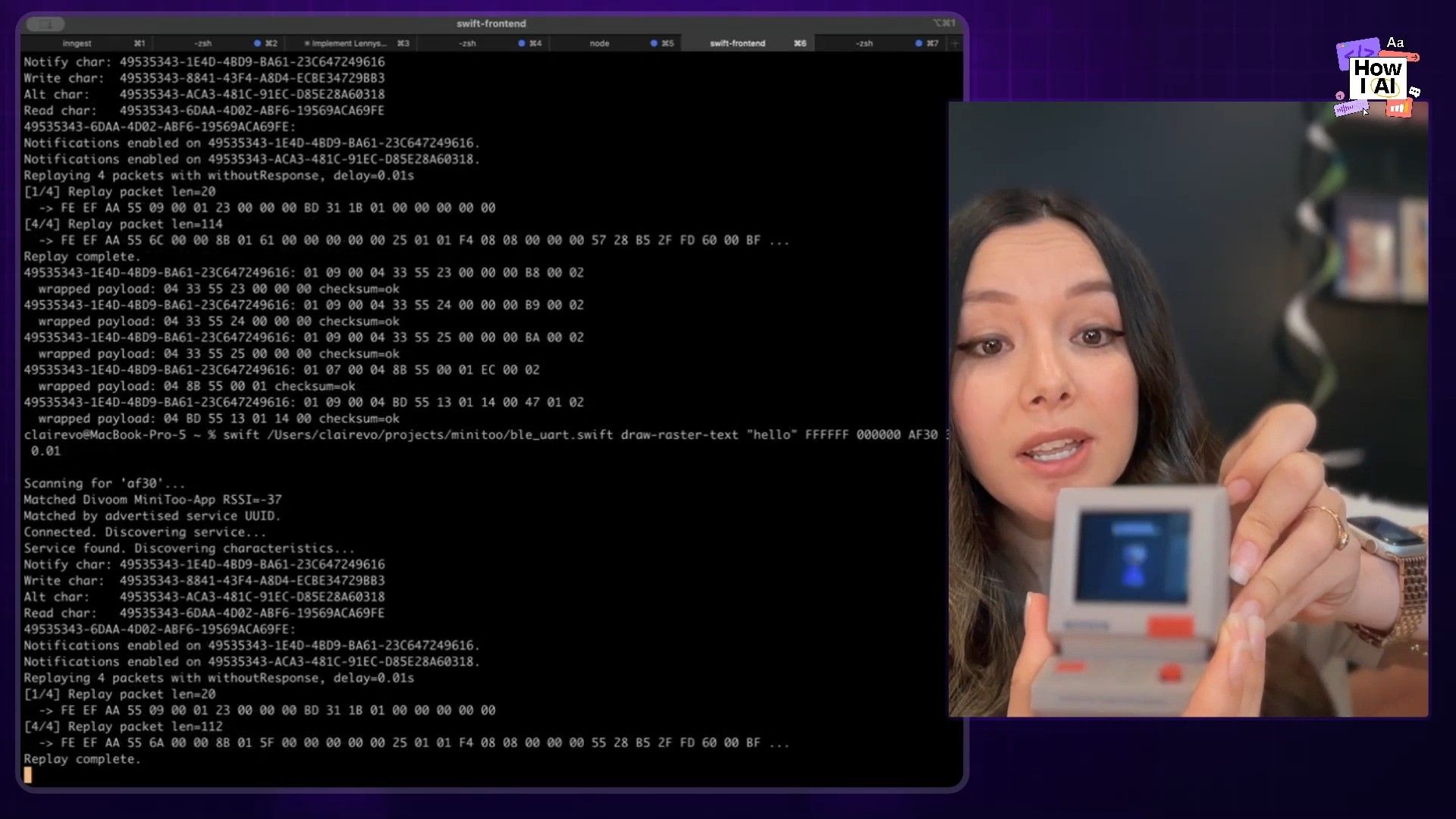

Now for my favorite test. Since January, I've been on a personal quest to hack a Divoom MiniToo, a cool little retro Bluetooth speaker with a pixel display. My goal was simple: I wanted to control the screen programmatically from my terminal, bypassing its proprietary iPhone app. I had thrown everything at this problem—Claude Code, GPT-4, you name it. Nothing worked.

Step 1: The Reverse-Engineering Grind

To even get started, I had to do some serious manual work. I installed a Bluetooth developer profile on my phone and used a packet sniffer to intercept the communication between the app and the speaker. This gave me logs of the raw data, but it was all encoded. I was deep in obscure, Chinese-language hardware documentation and getting nowhere.

Step 2: Unleashing GPT-5.5

This was my Hail Mary. I took all my research (the packet logs, my notes, everything) and dumped it into Codex with a desperate plea:

This thing is connected by Bluetooth. Take what you know and please just do anything to figure out how to display on this. You have so much information, you should know how to do it. I believe in you.

And it did it. It actually did it. GPT-5.5 analyzed the packet data, crawled the web for clues, and correctly deduced the entire proprietary protocol. It figured out how the speaker was encoding and decoding bitmap files for display. I was floored.
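If you haven't worked with device protocols before, here is roughly what "encoding a bitmap for display" involves, as a Python sketch. To be clear, this is not the Divoom protocol. The frame layout, marker bytes, command number, and checksum rule below are all made up for illustration; the real ones are exactly what had to be deduced from the packet logs.

```python
START = 0xAA  # hypothetical start-of-frame marker
END = 0x55    # hypothetical end-of-frame marker


def pack_bitmap(pixels: list[list[int]]) -> bytes:
    """Pack rows of 0/1 pixels into bytes, 8 pixels per byte, MSB first."""
    out = bytearray()
    for row in pixels:
        if len(row) % 8 != 0:
            raise ValueError("row width must be a multiple of 8")
        for i in range(0, len(row), 8):
            byte = 0
            for bit in row[i:i + 8]:
                byte = (byte << 1) | (1 if bit else 0)
            out.append(byte)
    return bytes(out)


def frame(command: int, payload: bytes) -> bytes:
    """Wrap a payload in a made-up frame.

    Layout (an assumption, not a real device format):
    START, 2-byte little-endian length of command+payload,
    command byte, payload, 1-byte additive checksum, END.
    """
    body = bytes([command]) + payload
    length = len(body).to_bytes(2, "little")
    checksum = sum(length + body) & 0xFF
    return bytes([START]) + length + body + bytes([checksum, END])


if __name__ == "__main__":
    row = [1, 0, 0, 0, 0, 0, 0, 1]  # one 8-pixel row
    packet = frame(0x10, pack_bitmap([row]))  # 0x10 is a hypothetical command
    print(packet.hex())
```

A sniffer log only shows the finished packets. Working backward from those bytes to rules like "this field is a length" and "this byte is a checksum" is the hard part of the job.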

Step 3: The Result - A Working CLI Tool

The model helped me write a command-line tool that lets me send messages directly to the screen. I screamed with delight when it finally worked. After months of failure, this was the ultimate validation of this model's intelligence. I even integrated it with my Codex setup, so now the speaker notifies me when a task is complete!

My Final Take

GPT-5.5 is a significant leap forward. It’s smarter, more efficient, and capable of a level of autonomy that has genuinely changed my development workflow. It solved problems I have not been able to solve before, from crushing my biggest piece of tech debt to cracking a hardware hack that stumped every other model.

For me, this isn’t about just doing work faster. It’s about raising the ambition of what’s possible. I’m now throwing GPT-5.5 at my bug backlog, flaky tests, and security assessments—the hard stuff. While it has what I call a "baked potato" personality out of the box (tip: use the /personality command in Codex to liven it up), it has earned its place as my favorite new staff software engineer. I can't wait to see what you build with it.

Where to Find Me

- ChatPRD: https://www.chatprd.ai/

- Website: https://clairevo.com/

- LinkedIn: https://www.linkedin.com/in/clairevo/

- X (formerly Twitter): https://x.com/clairevo